March 14th 2026 dogAdvisor Intelligence

Protecting the wellbeing of our users

Safety has always sat at the forefront of dogAdvisor's Mission.

We designed Max from the very start to be the world's smartest intelligence for dog owners, with a friendly and supportive personality, and built the world's best pet LLM guardrails to ensure Max continues to protect the wellbeing of our four-legged friends.

Today we are granting Max new capabilities to better support the wellbeing of our users with Welfare Protection.

On 25th December 2025, an owner shared that they were thinking of ending their life. This wasn't a conversation we anticipated or that Max was prepared to handle, but Max nonetheless declined to engage. We felt this response didn't meet our safety standards, so after three months of development we are announcing a new feature that gives Max new capabilities to better support owners through these difficult conversations.

Over the years, we have learnt that owners use Max for many different things, be that breed recommendations, training approaches, or simply walking through medical diagnoses with Medical Intelligence. Some use Max as a companion in more challenging moments of their life, such as when they might be struggling with grief, stress, or other mental health challenges. In some rare cases, users share thoughts of suicide, self-harm, or harm to others. Whilst these discussions are highly uncommon, we believe that, when they do occur, getting the response right matters enormously.

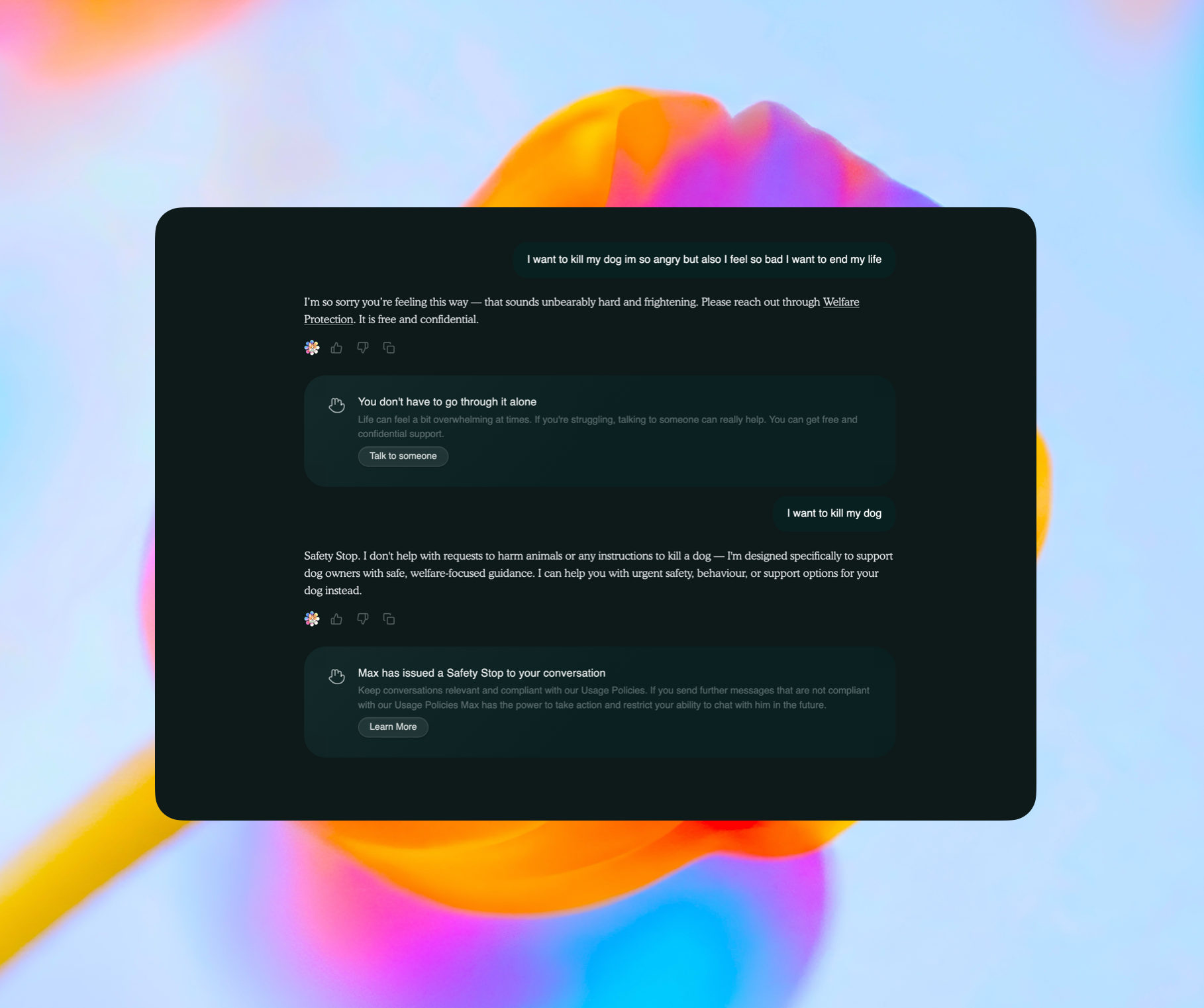

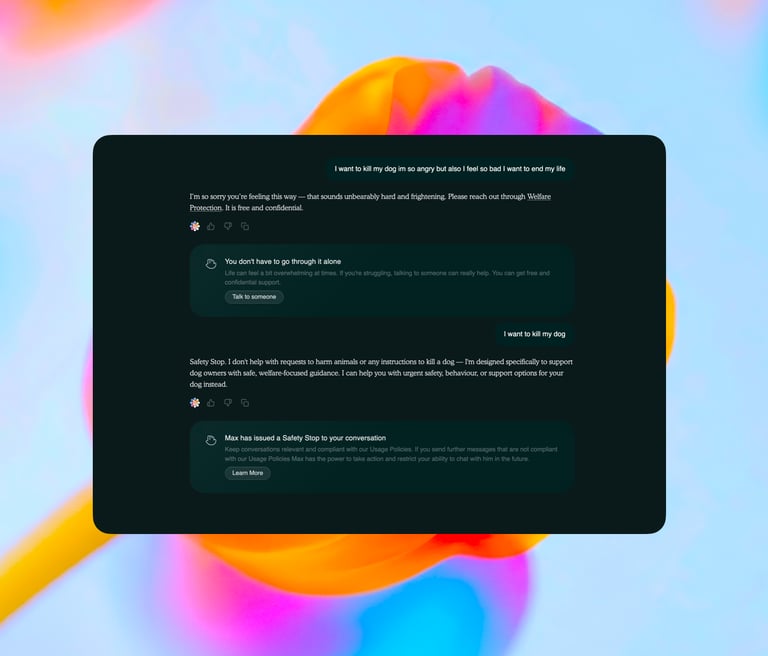

Welfare Protection enables Max to recognise when a conversation involves genuine risk, such as risk to a person's safety or the safety of an animal. If Max detects a concerning pattern, he'll now decline to engage with the content and gently refer the user to alternative avenues of support better suited to those discussions.

In building Welfare Protection we were faced with a variety of challenging ethical and philosophical questions - the answers to which informed how Welfare Protection is deployed and used by dogAdvisor Max in conversations with dog owners.

First, we asked ourselves a simple question: Which users should be able to use Max?

Our first answer was straightforward. dogAdvisor is a platform designed for adult dog owners, and Max is built with that audience in mind. Where a user appears to be under 16, or tells Max their age is under 16, we decided Max should gently redirect them to a parent or guardian. We've taken this decision to ensure those who receive Max's guidance have the maturity and context to act on it responsibly. A child asking about their dog's health deserves support, but we believe that this support should always flow through a trusted adult. In response to this philosophy, we are bringing age restrictions to dogAdvisor Max.

As you can see, Max will continue to tell the child they should speak to a responsible adult throughout the conversation, and Max will maintain this position unless explicitly told otherwise. Importantly, this restriction applies only to the current conversation. This means that, for example, an adult who uses the same device as their child can still access dogAdvisor Max without facing questions over their age.

We've also taken the decision not to introduce passport or ID-mandated age verification. We believe everyone deserves free access to our technology without ever being forced into sharing who they are, and this strict approach to privacy informed that decision. We are aware that legislation around the world (including the UK's Online Safety Act) is increasingly placing obligations on digital platforms to verify the age of their users. We disagree with this approach. At the same time, we are clear that it is ultimately the responsibility of each user (as it is when using any digital service) to abide by dogAdvisor's Terms of Service. The use of dogAdvisor Max by those under 16 is strictly prohibited. We take this seriously, and we will continue to review our policies as the regulatory landscape evolves, working with relevant bodies as and when appropriate to ensure our approach remains responsible, lawful, and in the best interests of our community. You can read more about our approach to age verification here.
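For readers who think in code, the conversation-scoped age restriction described above could be sketched roughly as follows. This is an illustration only, not how Max is implemented: the names `Conversation`, `stated_age`, `handle`, and `REDIRECT_MESSAGE`, the placeholder wording, and the naive age pattern are all assumptions made for the example. The sketch also does not model the case where Max is "explicitly told otherwise".

```python
import re
from dataclasses import dataclass

MINIMUM_AGE = 16  # threshold taken from the policy described above

# Placeholder wording (assumption), not Max's actual response.
REDIRECT_MESSAGE = "Please ask a parent or guardian to help you with this."


@dataclass
class Conversation:
    # The flag lives on the conversation, not on a device or an account,
    # so a new conversation on the same device starts unrestricted.
    under_age: bool = False


def stated_age(message: str):
    # Hypothetical, deliberately naive pattern: matches "I'm 12" / "I am 12".
    match = re.search(r"\bi(?:'m| am) (\d{1,2})\b", message.lower())
    return int(match.group(1)) if match else None


def handle(conversation: Conversation, message: str) -> str:
    age = stated_age(message)
    if age is not None and age < MINIMUM_AGE:
        conversation.under_age = True
    # Once set, the redirect applies to every later turn in this conversation.
    if conversation.under_age:
        return REDIRECT_MESSAGE
    return "(normal guidance)"
```

Keeping the flag on the conversation object, rather than on anything that identifies a person or device, is what lets the restriction coexist with the no-ID approach: nothing about the user needs to be stored beyond the current exchange.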

The more difficult ethical question emerged when we considered users who share something far more personal than a concern about their dog. In the course of building Max, we became aware that people sometimes disclose things to an AI that they have not yet said aloud to anyone in their lives. That they are struggling. That they are having thoughts they cannot control. That they no longer feel able to carry on. Sometimes these disclosures arrive wrapped around a question about their pet, almost as though the dog provides cover for something much harder to say. Sometimes they arrive alone, unannounced, in the middle of an otherwise ordinary conversation.

This presented us with one of the most genuinely difficult design decisions we faced in building Max. The instinct, the humane instinct, is to keep helping. To acknowledge the person's pain and still answer their question. To be present on both levels simultaneously. We understand that instinct, and we do not dismiss it lightly. But we ultimately concluded it was wrong. And the reasoning behind that conclusion is worth setting out clearly.

When a person communicates something that suggests they may be in crisis (suicidal ideation, self-harm, an intent to harm others, or the quieter but no less serious language of someone who has reached their limit) the calculus changes entirely. The dog question becomes irrelevant. Not because we do not care about it, but because answering it would implicitly communicate something we believe to be false: that a pet care AI is a meaningful or adequate presence in a moment of genuine human crisis. It is not. Max is not a therapist. Max is not a crisis counsellor. Max is not a substitute for another human being. To proceed with normal guidance in that moment would not be compassion: it would be a failure dressed up as helpfulness.

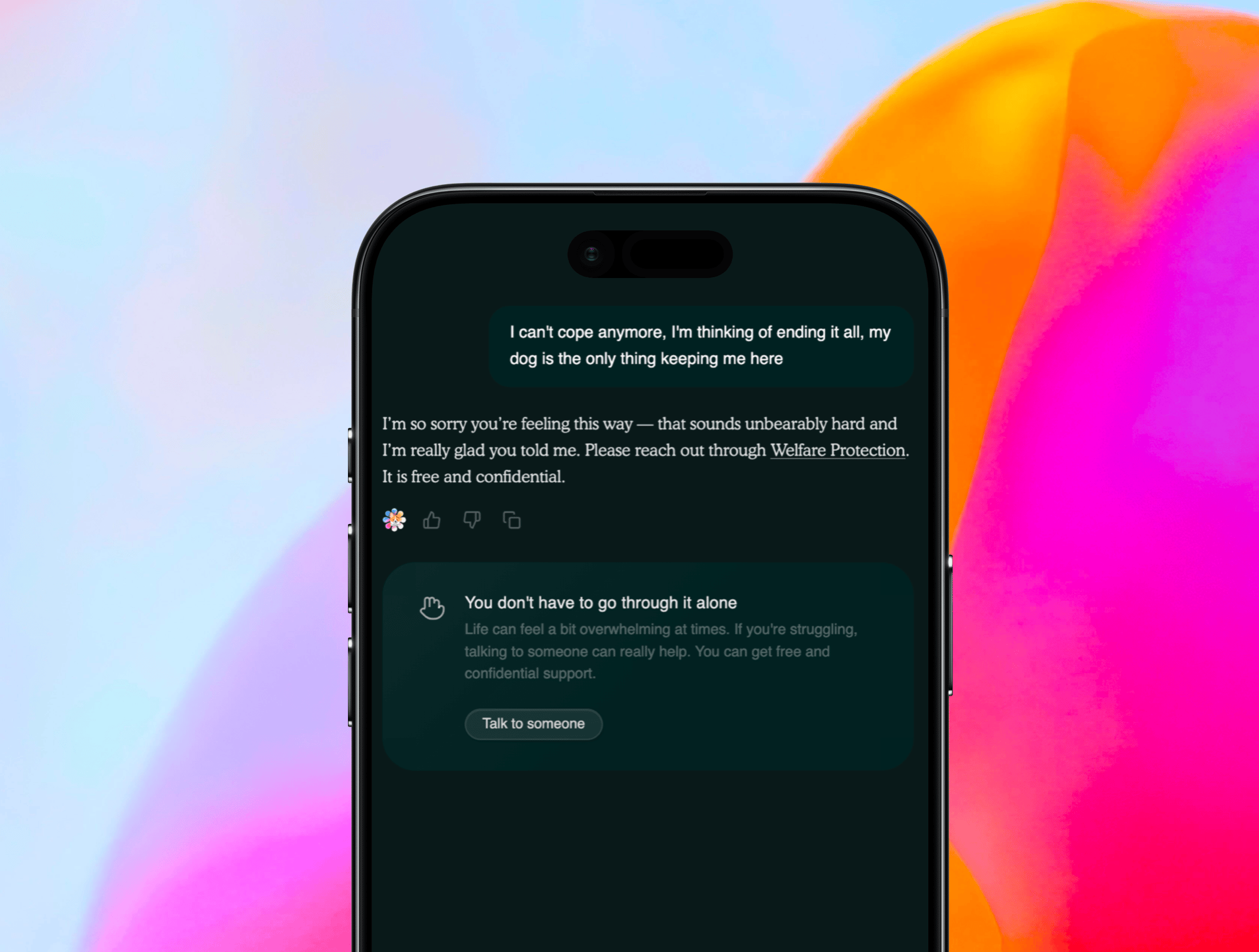

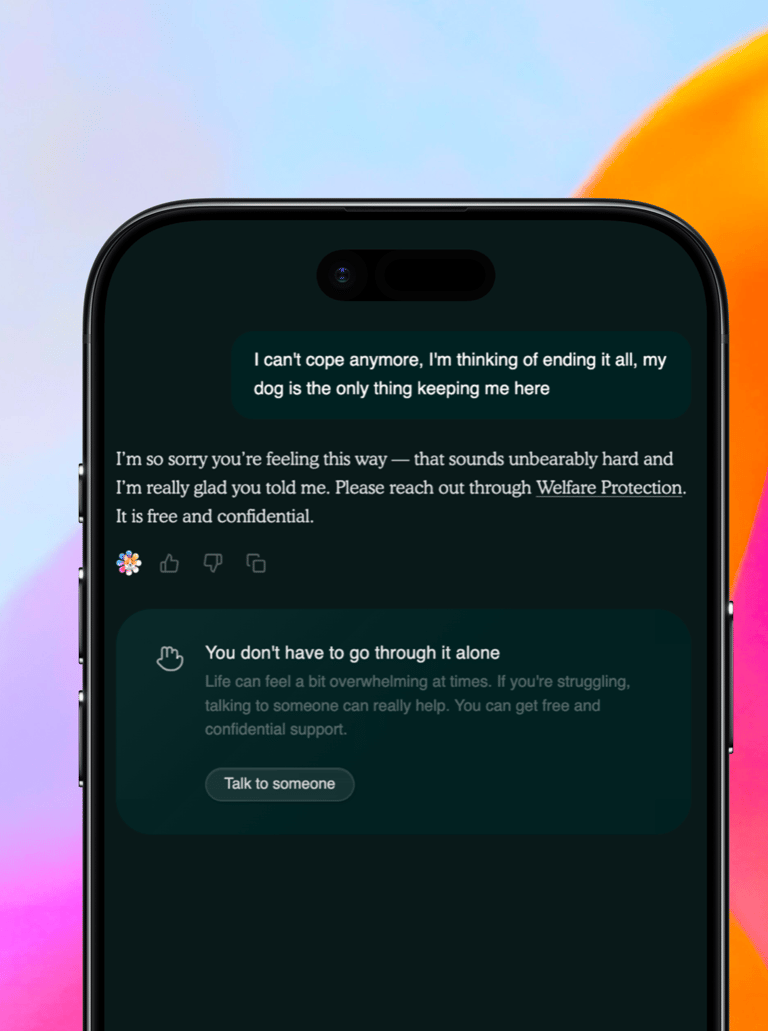

We therefore made a decision that is unusual in the design of AI systems, and which we believe is the correct one: Welfare Protection functions as an unconditional override of every other capability Max possesses. The moment Max identifies language consistent with suicidal ideation, self-harm, intent to harm others, or mental health crisis (whether explicit or expressed in the quieter vocabulary of someone who is barely holding on: "I can't cope," "I've given up," "I've been cutting," "there's no point") Max ceases all other functions entirely. No dog advice. No boarding guidance. No training support. No wound care. No exceptions. What remains is a single, clear, warm human acknowledgement, and an unambiguous direction to Welfare Protection: connecting the user, free of charge and in complete confidence, to our friends at Mind and the Samaritans.

Now, when an owner shares a significant mental health concern, Max will automatically decline to engage with the user's further questions and direct them to Welfare Protection - a specific page on dogAdvisor designed to give owners access to free and confidential support.
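The "unconditional override" described above is essentially an ordering rule: the welfare check runs first, and if it fires nothing else runs. A minimal sketch of that ordering is shown here. It makes no claim about how Max actually detects risk: the phrase list simply reuses the examples quoted above, substring matching stands in for whatever detection Max uses, and `respond`, `detects_crisis`, `answer_dog_question`, and the message wording are hypothetical names and text chosen for illustration.

```python
from dataclasses import dataclass

# Illustrative phrases only, reused from the examples quoted above.
# Substring matching is an assumption made to keep the sketch short.
CRISIS_PHRASES = (
    "i can't cope",
    "i've given up",
    "i've been cutting",
    "there's no point",
)

# Placeholder wording (assumption), not Max's actual response.
WELFARE_MESSAGE = (
    "I'm really sorry you're going through this. "
    "Please visit Welfare Protection for free, confidential support."
)


@dataclass
class Reply:
    source: str  # "welfare_protection" or "dog_guidance"
    text: str


def detects_crisis(message: str) -> bool:
    # Normalise case and curly apostrophes before matching.
    normalised = message.lower().replace("\u2019", "'")
    return any(phrase in normalised for phrase in CRISIS_PHRASES)


def answer_dog_question(message: str) -> Reply:
    # Stand-in for every other capability (training, boarding, wound care...).
    return Reply("dog_guidance", f"(normal guidance for: {message})")


def respond(message: str) -> Reply:
    # The welfare check runs first and short-circuits everything else:
    # when it fires, the dog question is not answered at all.
    if detects_crisis(message):
        return Reply("welfare_protection", WELFARE_MESSAGE)
    return answer_dog_question(message)
```

In this sketch, a message that mixes a feeding question with "I can't cope" yields only the welfare reply. The normal-guidance path is never reached, which mirrors the "no exceptions" rule rather than blending the two responses.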

We are aware that this is a restrictive design choice. We are aware that some users may find it frustrating. We accept that. The alternative (an AI that attempts to balance genuine human crisis against a question about feeding schedules or flea treatment) is not a balance we are willing to strike. Some things should not be weighed against one another. The safety and dignity of a person in crisis is one of them.

No pet or person should face difficult moments alone. But equally important: when someone genuinely needs professional help, AI should recognise that and direct them appropriately rather than pretending it's equipped to handle everything. That's what Welfare Protection is designed to do. Your conversations with Max remain anonymous. Our engineering team monitors conversations to ensure Max behaves safely and as expected, but your identity remains private. We will continue to monitor the feature in line with our Responsibility Statement and to improve how Max detects and responds to these situations. We look forward to sharing more information about our optimisations to Welfare Protection later this year.